“I'm almost ashamed to be known as a DJ”: Dave Clarke on the state of dance music and Archive One

The Baron of Techno on making his seminal album, how he approaches remixes and why ’90s sounds are back in fashion

MusicRadar's up-to-date news coverage of the latest musical instruments, music equipment, music tech and trade events

"You could be the greatest guitar player in the world but a ten-year-old could learn to play that in an hour", he says of the "magic" melodic moments he loves to discover in songwriting

It sounds like Taylor’s been raiding the ‘80s synth department

Betts wrote some of the Allman Brothers' biggest hits, including Ramblin’ Man, influencing a generation of players with a sound that threw all kinds of styles into the pot and made it work

Entry-level devices for rekordbox, Serato, Traktor and more - perfect for beginner DJs

It’s the guitar feelgood story of the year as the Creed/Alter Bridge guitarist is reunited with “prized possession” after his superhero manager Tim Tournier tracked it down for his 50th birthday

Designed to encourage adventurous sonic exploration, MYTH uses machine learning to resynthesize samples into complex oscillators and transform their timbre

“I brought this weird Roland monosynth upstairs. It was an early ’70s primitive synth and we were bugging out over it”

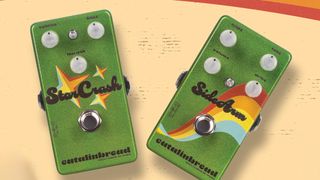

Welcome to the '70s, again, with the StarCrash offering a bias-equipped take on a silicon Fuzz Face and the SideArm a more tweakable version of that lil' green overdrive

Via some riff editing from Lars Ulrich

And a fine Thumpin' Thursday to you, too, as Boss effectively delivers an full pro-quality digital rig for your consideration

The late composer might have been known for his cutting-edge synth use, but he didn't agree with every studio breakthrough

Join us as we gather some of the best studio minds to come up with some incredible insider tips on all aspects of music production

Only one other producer has managed it

Before she became a six-time Brit Award winner in 2024, the British singer wrote for artists including Beyoncé and Rihanna

It seems that the streaming platform could be set to capitalise on the demand for multiple versions of viral hits

Plus, 5 other things we learned from the session ace’s interview with Rick Beato

Transform your voice with Computer Music's June issue – and get a FREE FM synth plugin worth £44