“Where are the famous songs?”: Neil Tennant questions whether Taylor Swift has released 'her Billie Jean'

Shake It Off is “all sung one or two notes going up and down,” he says

Vigil Of War guitarist joins the Chicago band after an open audition process that attracted 10,000 applications

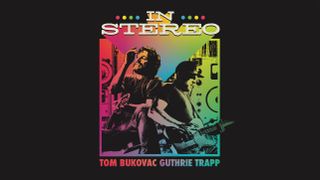

Nashville stringers Tom Bukovac and Guthrie Trapp together on record at last – "We kept a lot of first takes"

As Annie Clark releases her seventh album, Kate Puttick learns why the studio is both her heaven and her hell, and why there’s still a place for drum machines even when you’ve got Dave Grohl to hand

For Collen, working with Lange is like “going to school for your favourite subject” but recreating the studio magic live on Def Leppard tracks such as Live Bites can be very tricky at first

As the guitarist and songwriter goes public with his art, he reveals he's also looking to go back and rework some of the band's songs that Lizzy didn't do justice to in the studio

King checked in with Nashville’s Carter Vintage Guitars and got acquainted with a Les Paul with a “literally perfect” faded Iced Tea finish, and turned an acoustic standout track electric

Our expert pick of reliable headphones to suit any type of DJ, including options from Sennheiser, Technics and Pioneer

Do you know which synth or preset she could have been thinking of?

The IR-D preamp pedal takes those Friedman's high-end amp sounds and puts them in a tube-driven pedal, with dual boosts, onboard IRs, MIDI control and effects loop

Prepping for your first-ever DJ slot? Aside from the music, there’s a few other things you need to consider ahead of time so you can step into the booth with confidence

Xavier de Rosnay and Gaspard Augé lift the lid on their creative process in a new interview with Apple Music's Zane Lowe

After an unfortunate recording accident in the analogue tape days, Quincy Jones needed an 11th hour rescue job and duly made a late night call to the only player who could help

Expand your sound selection with a huge discount on drums, synths, vocals, and loads more - offer ends on 6 May

“A lot of people ask why I don’t use the Neve on her," says Stuart White. "And I love the Neve on vocals, don’t get me wrong, but the Neves tend to crackle when you turn the pre gain”

An Oberheim and Minimoog went for a song, but it was a Martin Gore guitar and E-mu sampler that stole the show

New album Everything Is Here expands Kartell's already diverse sound in new directions, taking cues from ‘70s soft rock and alt hip-hop. We hear more about how the project was made

The next edition of Elektron's widely beloved sampler and drum machine might look similar, but it beefs up the OG's specs considerably

Near-death experiences, breakdowns, talking puppets, heart attacks, sleeping in coffins… Just another Depeche Mode album, then