Best DJ controllers 2024: Hands-on control for Serato, rekordbox, Traktor and more - our expert picks

Hardware DJ devices for PC, Mac and iOS, tested by our experts

MusicRadar's up-to-date news coverage of the latest musical instruments, music equipment, music tech and trade events

Company plans to develop advanced machine learning algorithms that can automatically detect musical instruments

If the leak is genuine, Elektron's first hardware release in two years would bring stereo sampling, an expanded sequencer, more memory, more effects and more LFOs to the 'takt

Near-death experiences, breakdowns, talking puppets, heart attacks, sleeping in coffins… Just another Depeche Mode album, then

There are plenty of ideas to be found in the art world that could help you mix up your approach to music-making

IK Multimedia's amp, cab and effects modelling power has never been so portable, with the ToneX One capable of storing 20 presets and of accessing thousands of sounds from ToneNET

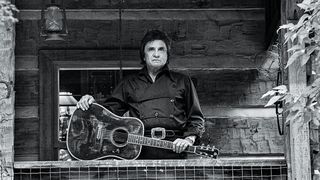

Songwriter is out 28 June and features songs the Man In Black first tracked in '93, produced and restored by son John Carter Cash and David “Fergie” Ferguson in the company of an all-star band

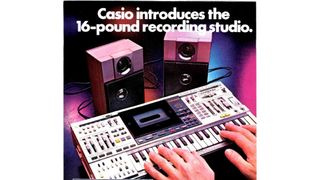

Synth workstation? Karaoke machine? Recording studio in a ghetto blaster? Answer: all - and none - of the above…

For our latest free sample pack, we ran a variety of loops and one-shots through the Casio SK-1, Akai S900 and Bugbrand BugCrusher

Pedal Pawn’s Chris King Robinson conducts an experiment that shows how strange and magical things happen when you dime old tube amps in large, empty spaces

Could it eclipse the $6m paid for Cobain's MTV Unplugged acoustic?

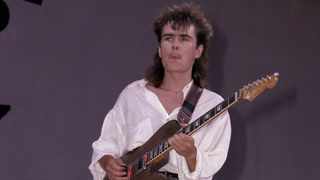

“I remember sitting in the Royal Box watching Queen do their thing and thinking ‘people are going to remember this. This is a bit special’”

A tremolo pedal even John Ford would have been proud to have on his 'board. Inspired by Monument Valley, it has six waveforms, five tap divisions, and quite possibly all the features you need

Blackstar's versatile amp series just got even more flexible: the CabRig makes its heads and combos a viable recording tool, with size and power options for the stage, studio or home

Tela makes use of modal synthesis, a technique used in physical modelling, to create "otherworldly timbres and textures"

Musical polymath takes issue with bearded superproducer

Classic interview: "The way he had it configured was so that everything acted like one big gigantic speaker"

“I guess we were sort of playing a game to see who could get the furthest behind without getting off beat,” says Saadiq of the recording of D’Angelo’s Lady

Danish house and techno artist Kölsch’s latest album, I Talk To Water, incorporates recordings by his late musician father and explores the emotional weight of grief. We found out more about its conception…